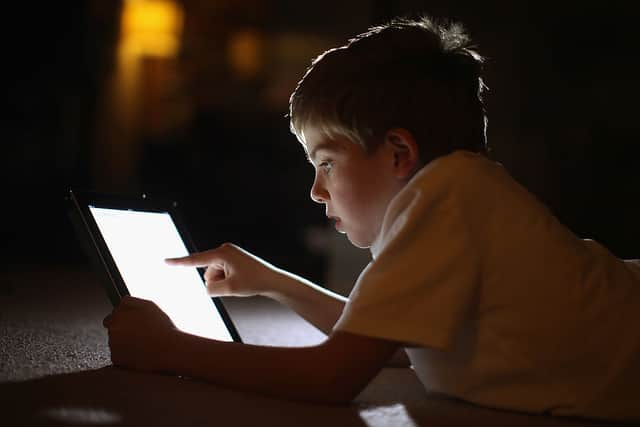

Online Safety Act becomes law in the UK, introducing new internet safety rules to 'protect children'

After a lengthy and controversial journey through Parliament, the government's Online Safety Act, which aims to make the internet safer for children, has become law.

The tougher new rules for online spaces were officially brought into effect on Thursday (26 October), when the legislation received royal assent. It means that social media platforms will now be required to ban and rapidly remove illegal content - such as child sex abuse, terrorism, and animal cruelty - and prevent children from seeing "harmful" material such as bullying or self-harm content.

Social media sites will also be required to give adults more control over what they see online, by offering clear and accessible ways for users to filter content and report problems. Those who fail to comply will face fines of up to £18 million or 10% of annual global revenue, whichever is greater, meaning potentially billions of pounds for the biggest companies, like Meta and X, formerly Twitter.

In the most extreme cases, tech and social media bosses could even face criminal convictions if they do not fulfil their safeguarding duties, with up to two years in prison on the cards.

Technology Secretary Michelle Donelan said the Online Safety Act meant the UK would become "the safest place to be online in the world." She continued: "The [Act] protects free speech, empowers adults, and will ensure that platforms remove illegal content. At the heart of this [Act], however, is the protection of children."

Meanwhile, Baroness Kidron, a key supporter of the legislation, told NationalWorld: "I am delighted that the Online Safety Act is now law. I congratulate the Department of Science, Innovation, and Technology, and pay tribute to the many organisations and individuals that have played a part, in particular the broad coalition of children’s charities and the Bereaved Families for Online Safety. The wisdom and advocacy of these groups has made for a much better law.

"The mantle of responsibility for child online safety now falls firmly on the shoulders of the tech sector who under the watchful gaze of Ofcom must use the Online Safety Act to make meaningful changes to children’s online experiences. This is just one small step toward building the digital world children deserve."

The newly passed law comes at a time of increasing concern for the safety of children using the internet. On Wednesday (25 October), the Internet Watch Foundation (IWF) warned that its "worst nightmares" about artificial intelligence (AI) are coming true, as it revealed it had found thousands of AI-generated child sex abuse images on a dark web forum.

The National Crime Agency also previously told NationalWorld that the sexual abuse of children in online spaces is "increasing in scale, severity, and complexity", as it estimated that there are more than half a million people in the UK who pose a sexual risk to children. At the time, the agency also argued that social media companies were "not doing enough" to protect children, and urged ministers to ensure the Online Safety Bill became law.

However, there were also those who pushed against the Online Safety Bill (now Online Safety Act), arguing that it infringed upon people's right to privacy. WhatsApp threatened to stop offering its app in the UK if the legislation passed, with boss Will Cathcart saying he would refuse to weaken its end-to-end encryption system (which makes messages readable only to those who have sent and received them).

Regulator Ofcom will be given powers to enforce the new rules, and will set out how it plans to do so in the coming weeks. The government said that the majority of the Online Safety Act's provisions would commence in two months' time, but that Ofcom's work on tackling illegal content would begin immediately.

Ofcom chief executive Dame Melanie Dawes said: "These new laws give Ofcom the power to start making a difference in creating a safer life online for children and adults in the UK. Ofcom is not a censor; our new powers are not about taking content down. Our job is to tackle the root causes of harm: we will set new standards online, making sure sites and apps are safer by design."

What will the Online Safety Act do?

The Online Safety Act will force social media companies and tech firms to protect children from some legal but harmful material, such as by introducing age verification processes to stop children accessing pornography.

Platforms will also need to show they are committed to removing illegal content, such as:

- Child sexual abuse

- Terrorism

- Extreme sexual violence

- People smuggling

- Revenge pornography

- Animal cruelty

- Selling illegal drugs or weapons

- Controlling or coercive behaviour

The law also introduces new offences, such as cyber-flashing and the sharing of 'deepfake' pornography, and includes measures which make it easier for bereaved parents to obtain information about their children from social media firms.

Sir Peter Wanless, chief executive of the NSPCC, which has repeatedly called for the Online Safety Act to become law, said today was a "watershed" moment. He continued: "The NSPCC will continue to ensure there is a rigorous focus on children by everyone involved in regulation. Companies should be acting now, because the ultimate penalties for failure will be eye-watering fines and, crucially, criminal sanctions."
